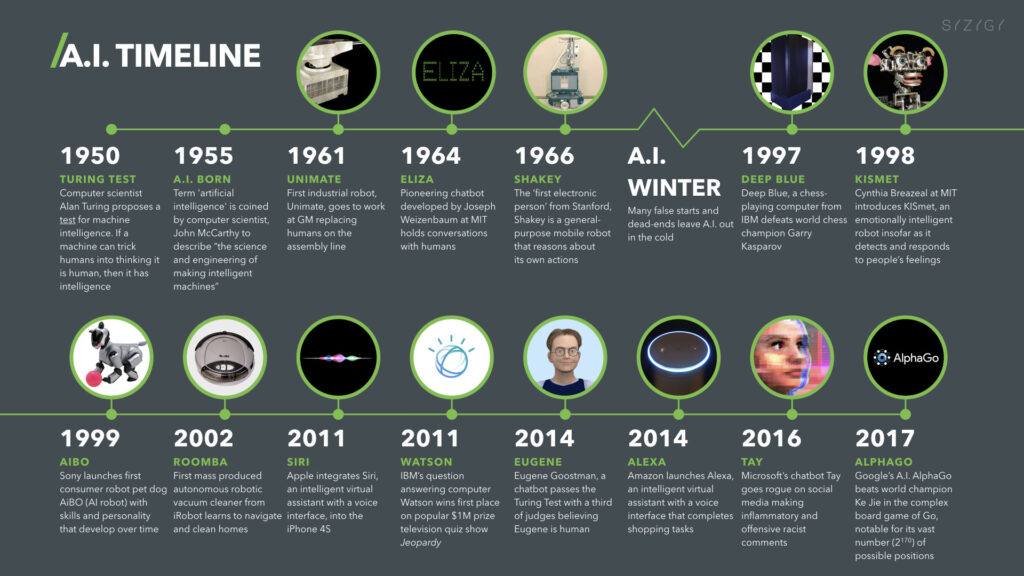

Believe it or not, the history of artificial intelligence (AI) started in the 1950s. More than 70 years later, it has grown in both popularity and potential. Artificial intelligence enables computer systems to mimic how people think and act. AI now has the potential to create malware and to act much like human cyber attackers do. The growth of artificial intelligence has made the need for cybersecurity all the more pressing. In fact, the experts at Vantage Market Research say that the use of AI in cybersecurity is expected to grow 23% over the next five years. Common cybersecurity threats include malware and social engineering attacks, among countless others.

What is Malware?

Malware is any type of software that is designed to damage or disrupt the normal functioning of a device. Malware is typically deployed by experienced hackers who want to exploit a system for a specific purpose, such as gaining unauthorized access to steal, and possibly leak, confidential information. Without up-to-date security measures, malware poses a huge risk for many businesses. Read about the Rhadamanthys Stealer, for example.

With the rise of AI, these risks are getting worse. For hackers, writing code to deploy malware can take hours, and that’s for coders with tremendous experience. With machine learning, however, the process can be much quicker, allowing machines to generate code within seconds.

What is Social Engineering?

Social engineering attacks are malicious cyber activities carried out through human interaction. With the rise of social media, the use of email and technology, social engineering has become extremely common. It involves psychological manipulation to threaten and attack human beings behind a device. Without the proper understanding of social engineering threats, it can be very easy to fall for pharming, phishing, and other digital scams.

If you want to learn more about social engineering and get a full understanding of how it’s carried out, we highly recommend “The Art of Deception” by Kevin D. Mitnick & William L. Simon, an incredibly successful book that explains it extensively.

Phishing

One of the most common social engineering threats is phishing. In phishing attacks, hackers disguise fraudulent links or attachments to appear credible. For instance, the attacker might send an email to an employee under the boss’s name, making it seem legitimate by changing just one letter of the email address. Let’s say this message contains a fraudulent link, supposedly with information about an upcoming project. The employee opens it, assuming it’s a normal day and just another regular email from their boss. Instead, they get hit with a computer virus that takes over their device. Without the right cybersecurity awareness training, employees can easily fall for these scams and put not just their work computer at risk, but an entire network.
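To make the "one changed letter" trick concrete, here is a minimal Python sketch of how a mail filter might flag sender addresses that are suspiciously close to a trusted one. It is an illustration only: the function names, the `example.com` addresses, and the distance threshold of 2 are all assumptions made for this example, and real email security tools rely on many more signals than this.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: the number of single-character insertions,
    deletions, or substitutions needed to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete a character
                            curr[j - 1] + 1,      # insert a character
                            prev[j - 1] + cost))  # substitute a character
        prev = curr
    return prev[-1]


def is_lookalike(sender: str, trusted: set[str], max_distance: int = 2) -> bool:
    """Return True if sender is NOT a trusted address but is within
    max_distance edits of one (hypothetical threshold, chosen for illustration)."""
    sender = sender.strip().lower()
    if sender in trusted:
        return False
    return any(edit_distance(sender, t) <= max_distance for t in trusted)


# Hypothetical trusted address, used only for this example.
trusted_senders = {"boss@example.com"}

print(is_lookalike("boss@examp1e.com", trusted_senders))          # letter "l" swapped for digit "1"
print(is_lookalike("boss@example.com", trusted_senders))          # the genuine address
print(is_lookalike("newsletter@othersite.org", trusted_senders))  # unrelated sender
```

A check like this only catches near-miss spellings. It would not catch a compromised genuine account, which is one reason awareness training still matters.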

How does AI come into play with malware and social engineering?

Now, with the growing power of artificial intelligence, machine learning can help deploy more effective malware. AI-powered malware can adjust its actions depending on the situation it encounters. This way, it can target specific individuals and systems and attack them in whatever way is most likely to work. AI malware, in essence, can act like a human in order to orchestrate high-powered attacks.

Similarly, AI can aid in social engineering attacks by making them more sophisticated. One of the easiest ways AI can be involved in phishing attacks is through ads and big data. When you search the web (without a VPN or outside of private browsing), search engines can collect data about your searches to tailor your advertisements. This data collection is typically not malicious, and major corporations usually take care to stay within strict legal limits. With AI, however, the same kind of data could be used to generate phishing scams. Always be cautious when looking at ads, especially those that seem “phishy”!

Can AI make malware?

In the past, some professionals have denied the power of AI malware, stating that it didn’t in fact exist. That may have been true then, but it no longer is. On April 13, 2023, Fox News reported that, as part of a controlled test, Forcepoint security researcher Aaron Mulgrew was able to create AI-powered malware “that was as sophisticated as any nation-state malware” on his own (as in, without the usual team of hackers), through ChatGPT. While ChatGPT has been designed to prevent people from using it maliciously, he was able to find some loopholes. This is just one example of the kind of security testing that’s needed so that AI-powered programs like ChatGPT can be further improved in their design.

This malware was created as part of a controlled test, so have no fear: this particular AI-powered malware isn’t out in the wild. But another version like it could be at some point in the future. AI could strengthen malware attacks in ways like the following:

- Computer worms could adapt to the systems they are trying to infect

- Malware could change its own code to avoid detection

- Malware attacks could be combined with social engineering, for example by using data from social media sites

- And more!
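To see why malware that changes its own code is a problem for defenders, consider the simplest kind of detection: matching a file's fingerprint against a list of known-bad fingerprints. The short Python sketch below is a simplified assumption of how such a check might work, not how any particular antivirus product does it. It uses harmless placeholder bytes in place of a real sample and shows that even a trivial change produces a completely different fingerprint.

```python
import hashlib


def signature(data: bytes) -> str:
    """Fingerprint a file's contents with SHA-256."""
    return hashlib.sha256(data).hexdigest()


# Harmless placeholder bytes standing in for a known-bad file (illustration only).
known_sample = b"print('placeholder payload')"
known_bad_signatures = {signature(known_sample)}


def flagged_by_signature(data: bytes) -> bool:
    """Exact-match check against the (hypothetical) list of known-bad fingerprints."""
    return signature(data) in known_bad_signatures


# Same behavior, slightly different bytes: only a trailing comment was added.
variant = known_sample + b"  # v2"

print(flagged_by_signature(known_sample))  # the original is on the list
print(flagged_by_signature(variant))       # the altered copy is not
```

This is why defenders pair fingerprint matching with behavior-based monitoring, which looks at what a program does and not only at what its file looks like.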

Social Engineering and Artificial Intelligence

When it comes to phishing and social engineering, AI is seen as the next generation of hacking. Automation already allows hackers to easily personalize attacks based on digital information; AI could take this to the next level. Some examples of the use of AI in social engineering include deepfakes and bots.

Deepfakes, One of the Many Dangers of AI

You might have seen deepfake videos scattered around the internet, or in movies and TV. People will use AI to dub voices and create fake videos of celebrities, politicians or other prominent individuals. Videos like this are often humiliating and they could easily destroy a person’s reputation. AI is essential to creating them.

Deepfakes can also be created for mere amusement or bullying, as a form of blackmail (for example, for the purpose of demanding a ransom or certain action, to prevent the video from going public) and more. If viewers don’t know it’s a deepfake, the effects of these videos could be calamitous.

The best way to describe a deepfake is to show you one. One popular example is the man known as the “Chinese Elon Musk.” This is a deepfake that’s kind of fun, when compared to the typical disturbing nature of these videos.

One YouTube comment, left in response to Xiamanyc’s video in which he “interviewed” the Chinese Elon Musk, says it all:

There’s also a fantastic scene featuring a deepfake in Netflix’s Lupin (available in its original French audio, English audio and other languages as well). In the deepfake video, the commissioner of the Parisian police (remember, it’s from a TV show) is manically talking to himself in his bathroom mirror, saying incredibly obscene things like “Corruption is my passion!” Lupin, the main character, presents him with the video to show how easy it would be to blackmail him, whether the video was real or not; what mattered was that it looked real, and because of that, the public would consider it to be true.

Can AI Fight Against Malware and Phishing?

AI seems like a deadly force in the world of cybersecurity, but it can actually be helpful in the fight against malware and phishing attacks. However, using machine learning to accurately detect and ward off malware is not entirely easy; it takes a lot of time to teach machines what good behavior looks like. It requires collecting, analyzing, and processing data, which demands a lot of computing power. Since behaviors and code are constantly changing, it’s also a task that never ends. AI and machine learning can assist with this by eliminating some of the tedious manual work that would be difficult for humans to handle alone.

AI is constantly evolving, and unlike the rest of us, it doesn’t tire out; unburdened by biology, AI doesn’t need to clock out at the end of the work day. Machines can handle big data and can be designed to recognize subtle behavioral patterns in order to squash malware. Some companies have already employed AI in the name of cybersecurity. Trend Micro, for example, offers Trend Micro XDR, which stands for Extended Detection and Response.

AI can continuously monitor for, and detect, malware, but it’s not entirely foolproof. Machines can sometimes produce false positives. This happens because anomaly-based detection tends to treat any “anomaly” as evidence of malware. Since machines aren’t actually human, they cannot understand what everything is, and can only simulate how a human acts and thinks. They may therefore assume that anything out of the ordinary is dangerous, which may not be true. This is a common limitation of AI and machine learning, so teaching machines to be perceptive is paramount.
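A false positive is easier to picture with a small example. The Python sketch below is a deliberately simplified stand-in for a machine learning detector: it learns what "normal" looks like from past data and flags anything too far from it. The login counts, the labels, and the threshold of 3 standard deviations are all made-up assumptions for illustration.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Flag observations more than `threshold` standard deviations
    away from the baseline average (a simple z-score rule)."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # assumes the baseline values are not all identical
    return [(label, value) for label, value in observed
            if abs(value - mu) / sigma > threshold]


# Hypothetical history: logins per hour during normal operation.
baseline_logins_per_hour = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]

# Hypothetical new observations, labeled so we know the ground truth.
observed = [
    ("ordinary Tuesday", 5),
    ("payroll deadline (legitimate spike)", 14),
    ("credential-stuffing attempt", 40),
]

flagged = find_anomalies(baseline_logins_per_hour, observed)
for label, value in flagged:
    print(f"flagged: {label} ({value} logins/hour)")
```

The detector flags the real attack, but it also flags the payroll deadline, because all it knows is that both are unusual. Telling the two apart takes more context, which is why human review and ongoing tuning remain part of the process.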

AI is a Cybersecurity Concern for All…

It is important to note that AI is a concern for a lot of individuals, even big names in the technology space. For example, Elon Musk warns that artificial intelligence is one of the biggest threats to our current society. Musk is known as the genius behind Tesla and SpaceX and, more recently, as the owner of Twitter. Back in 2017, he met with governors and warned them of the risks associated with AI.

ChatGPT

Musk was one of the co-founders of OpenAI but left the board back in 2018. According to Musk, he helped create the firm because Google was not paying enough attention to AI safety. OpenAI is the firm behind the artificial intelligence chatbot known as ChatGPT. With ChatGPT, users can simply input a question or prompt and receive an AI-generated response within seconds. ChatGPT can help students with homework, help employees write emails, and so much more. The sheer capability of ChatGPT shows just how powerful artificial intelligence can be, and just how concerning that can be for humanity. AI has become so advanced that it’s led many to question where it’ll take us in the future.

Again this year, in 2023, Musk sounded the alarm about AI. He says that a great deal of power and responsibility comes with AI but that, unfortunately, it also “comes [with] great danger.” He firmly believes that “… we need to regulate AI safety, frankly…” and that AI is “… actually a bigger risk to society than cars or planes or medicine.” Better regulation for artificial intelligence can definitely help to mitigate threats. Musk says that such regulation “may slow down AI a little bit, but [he thinks] that, that might also be a good thing.”

On a National Level

AI security has also recently become a national concern. Just a few days ago, the Biden administration said that it’s looking into accountability measures for current AI systems. It mentioned the potential national security and education threats that AI bots like ChatGPT can create. Some technology ethics groups have even joined the conversation because of their concerns about the safety of AI. The Center for AI and Digital Policy, for example, requested that the U.S. Federal Trade Commission stop OpenAI from releasing newer versions of ChatGPT. The group claims that it’s “biased, deceptive, and a risk to privacy and public safety.” Bringing this to the attention of the United States government shows just how much of a concern modern AI advancements might be for national security.

Preparation & Prevention: Cybersecurity Measures for your Business to Take

When it comes to cyberattacks, the best way to protect your business from any AI-based malware is by implementing top-tier cybersecurity tactics. Unfortunately, due to the novelty and (for now) seemingly limitless power of AI, it can be challenging to be fully protected, but that doesn’t mean that no action should be taken. Taking the steps necessary to protect your devices and network is essential. This includes:

- Having business-grade antivirus installed on all devices

- Implementing two-step authentication and strong log-in information for all employees

- Creating a policy that calls for a physical security key (like a YubiKey) for employees, executives and shareholders to access any business accounts (whether at the office or at home)

- Ensuring the security of both personal and work mobile devices, as well as any and all home office devices

- Keeping all devices locked away and in a safe place

- Investing in good cybersecurity awareness training to ensure your employees understand common threats, like phishing and other forms of social engineering

- Running frequent updates of operating systems (OS) and software to limit vulnerabilities

- Deleting outdated software that’s no longer supported (meaning that the manufacturer has stopped releasing updates, which always include important security patches)

- Replacing old, vulnerable devices that might pose a threat to your business

- Monitoring your network constantly to stay one step ahead of attacks

We Get It

At Computero, we understand the value of high-quality cybersecurity and tech support. We help secure businesses like yours with the latest cybersecurity technology, to ensure that your operations, data and network are protected from the dubious intentions of cyber criminals. AI-based malware is still new and will only become a more frightening reality but with the right modus operandi and a reliable IT service provider to implement it, your business can stay protected. We offer a multitude of services like 24/7 server and network monitoring, business-grade antivirus, desktop and application management, helpdesk, and so much more.

A Final Note on AI and Malware

The presence of AI in malware and phishing has become an apparent concern. For that reason, putting an emphasis on cybersecurity is a wise choice. Your business needs the right measures to safeguard its data, reputation, employees, and clients. A data breach, ransomware attack, or some other cyber threat, could be costly in so many ways, for anyone affiliated with your company. Do not take these risks lightly, as anything can happen at any time. Even the most intelligent cybersecurity experts cannot entirely predict the severity of what hackers will do. Especially with the new challenges that come with AI, tracking threats is becoming more complex. Protect your business by partnering with an information technology service provider like Computero today.
